THE FOUNDATIONS OF COGNITION

3.7

Drooling Dogs, Superstitious Pigeons and Smartphone-Junkies

How does experience shape behavior? This step introduces classical conditioning, trial-and-error learning, and operant conditioning – foundational mechanisms through which behavior is modified by experience across the animal kingdom. Along the way, we’ll see how these learning systems can also give rise to surprising behaviors.

Reflexes and fixed action patterns operate in the moment. They require no memory of the past and hold no expectation of the consequences of action. In an ever-changing world, however, learning evolved to bridge this temporal gap – linking causes to consequences, and signals to outcomes.

Most people have heard of Pavlov’s famous experiment, in which a dog learned to salivate at the sound of a bell alone. This classic study illustrates classical conditioning, a form of associative learning in which an initially neutral stimulus becomes linked to a behavioral response through repeated pairing.

Over time, the bell becomes a predictor of food and elicits salivation even when no food is present.

Associative learning is one of the most foundational learning principles. It allows animals, across species, to adjust their behavior more flexibly. For those unfamiliar with the experiment – or as a quick refresher – you can watch this short video: https://www.youtube.com/watch?v=asmXyJaXBC8.

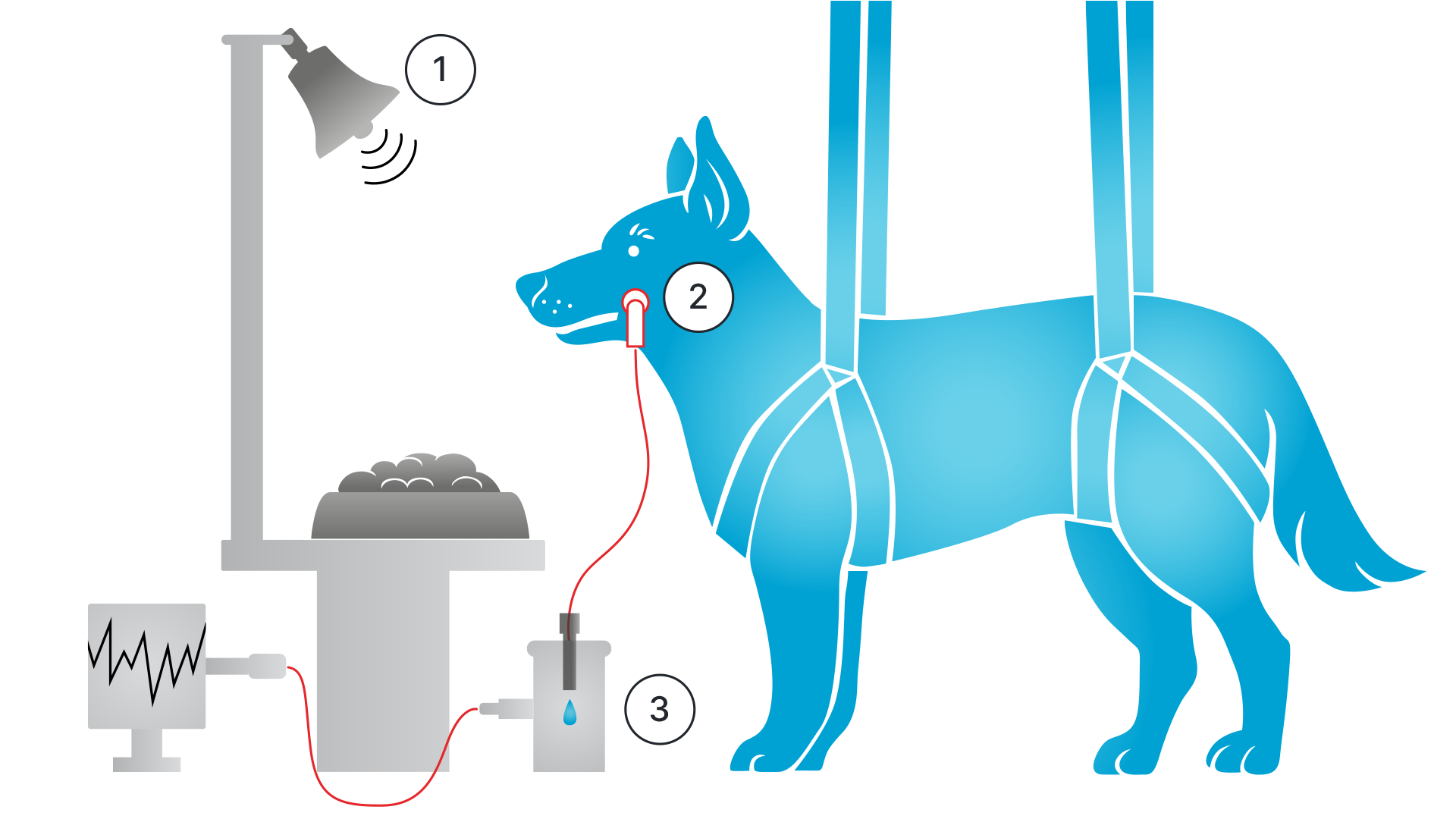

Pavlov’s experimental setup.¹

1. A bell rings, and moments later, food arrives.

2. After repeated pairings, the dog begins to salivate at the sound alone: a conditioned response.

3. The salivary reflex was measured via a fistula, a small tube that collected the saliva.
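The gradual strengthening of the bell-food association can be sketched numerically. The following is a minimal illustration, not Pavlov's own analysis: it assumes a simple error-correction update in the style of the Rescorla-Wagner model, which the text above does not mention. The function name `condition`, the learning rate of 0.3, and the maximum strength of 1.0 are all hypothetical values chosen for illustration.

```python
def condition(pairings, learning_rate=0.3, max_strength=1.0):
    """Return the bell-food association strength after each pairing.

    Assumed update rule (Rescorla-Wagner style, not from the source text):
    on every pairing the strength moves a fixed fraction of the remaining
    distance toward its maximum.
    """
    strength = 0.0  # the bell starts out as a neutral stimulus
    history = []
    for _ in range(pairings):
        strength += learning_rate * (max_strength - strength)
        history.append(strength)
    return history


for trial, s in enumerate(condition(10), start=1):
    print(f"pairing {trial:2d}: association strength = {s:.3f}")
```

Under these assumed values, the strength rises quickly during the first pairings and then levels off as it approaches the maximum.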

Around the same time as Pavlov, Edward Thorndike expanded our understanding of learning through his puzzle box experiments. In these experiments, a hungry cat was confined in a cage and had to escape in order to reach food placed outside.²

E. L. Thorndike

Public domain, via Wikimedia Commons

Thorndike observed that, at first, the cat tried many different actions to get out of the cage. Over time, however, ineffective behaviors, those that did not lead to escape, gradually became less frequent. In contrast, effective behaviors that helped the cat escape, such as stepping on a lever, occurred sooner on each new attempt.

From these observations, Thorndike formulated what he called the Law of Effect: behavioral responses that are closely followed by a satisfying outcome are more likely to become established and to occur again in response to the same stimulus. Importantly, Thorndike argued that the cats did not understand the situation or plan their actions. Instead, they learned through trial and error which behaviors worked – and those behaviors were more likely to be repeated.
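The Law of Effect can be illustrated with a small numerical sketch. This is not Thorndike's own formalization. It assumes five hypothetical actions with equal starting weights, that the cat picks actions in proportion to their weight, that only the lever press opens the box, and that each escape adds a fixed amount to the weight of the lever press.

```python
def law_of_effect(trials, reward=1.0):
    """Return the expected number of attempts per escape on each trial.

    Assumptions (illustrative only): five hypothetical actions, sampled in
    proportion to their weight; only "press lever" opens the box, and each
    escape adds `reward` to that action's weight.
    """
    weights = {"scratch": 1.0, "meow": 1.0, "push bars": 1.0,
               "bite": 1.0, "press lever": 1.0}
    effective = "press lever"
    expected_attempts = []
    for _ in range(trials):
        p_success = weights[effective] / sum(weights.values())
        # Attempts until the first success follow a geometric distribution,
        # so the mean number of attempts is 1 / p_success.
        expected_attempts.append(1.0 / p_success)
        # The satisfying outcome strengthens the response that produced it.
        weights[effective] += reward
    return expected_attempts


for trial, n in enumerate(law_of_effect(10), start=1):
    print(f"trial {trial:2d}: expected attempts before escape = {n:.2f}")
```

In this sketch the ineffective actions are never weakened directly; they simply become relatively less likely as the effective one is strengthened. No understanding or planning is modeled, only the consequence of each escape.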

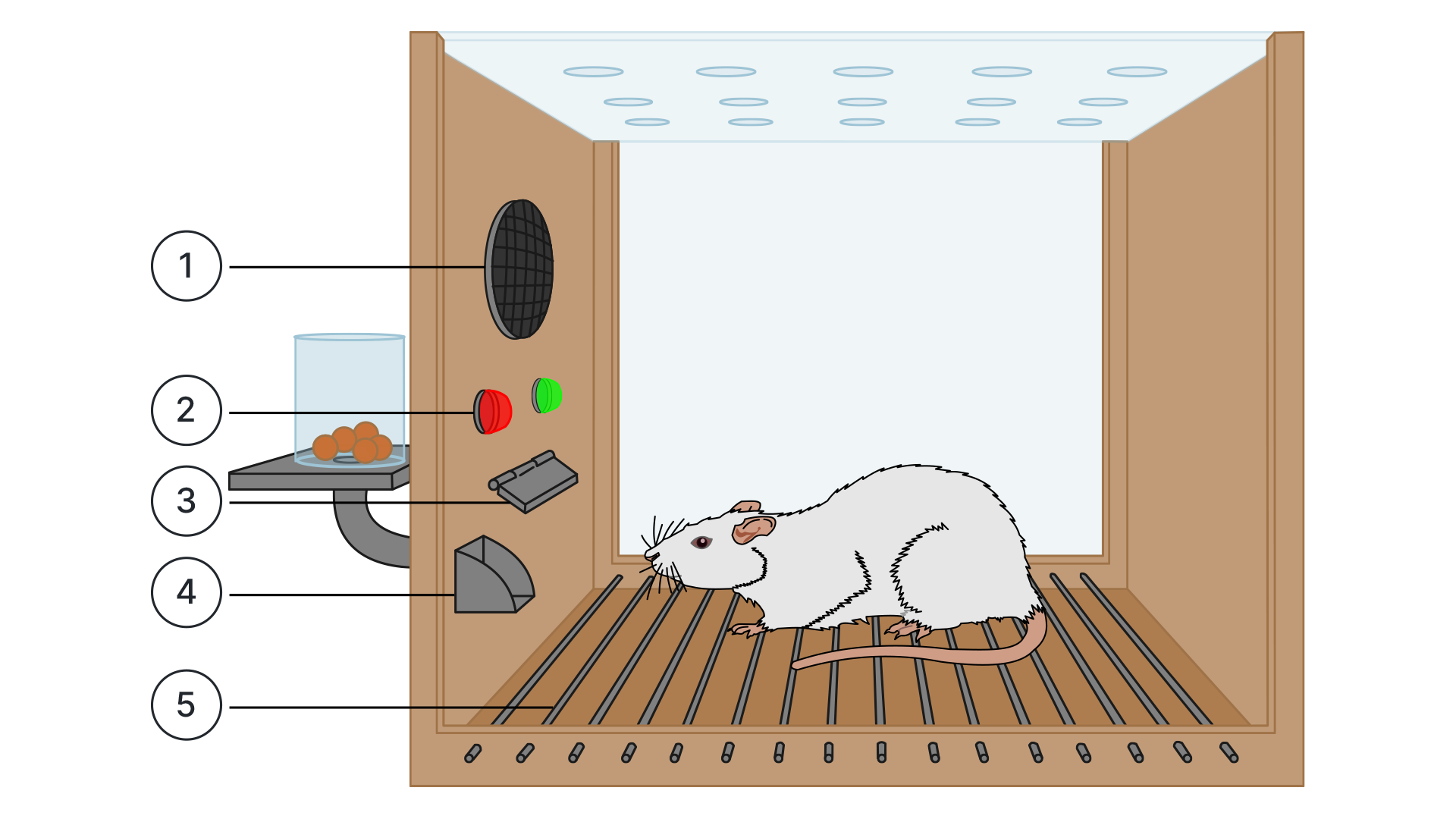

Building on this work, B. F. Skinner transformed the puzzle-box concept into what became known as the operant conditioning chamber, laying the foundation for much of experimental behavioral research and reinforcement learning. An operant conditioning chamber allows researchers to observe and manipulate behavior, and to study how an animal learns certain actions – such as pressing a lever – in response to specific conditions. For example, when the correct action is performed, the animal may receive positive reinforcement in the form of food. In other cases, behavior can be shaped by punishment, such as mild electric shocks that discourage incorrect responses.

Skinner box

1. Loudspeakers

2. Lights

3. Response lever

4. Food dispenser

5. Electrified grid

Adapted from AndreasJS / Vector: Pixelsquid, CC BY-SA 3.0, via Wikimedia Commons

Taken together, these foundational behaviorist experiments greatly advanced our understanding of the basic mechanisms of learning. At the center of this research is the concept of reinforcement – a primary means by which behavior is shaped through associations between actions and their consequences. Reinforcement occurs in two main forms: positive reinforcement, in which a rewarding stimulus follows a behavior, and negative reinforcement, in which an aversive stimulus is removed or avoided. But what can we actually learn from these experiments about cognition?

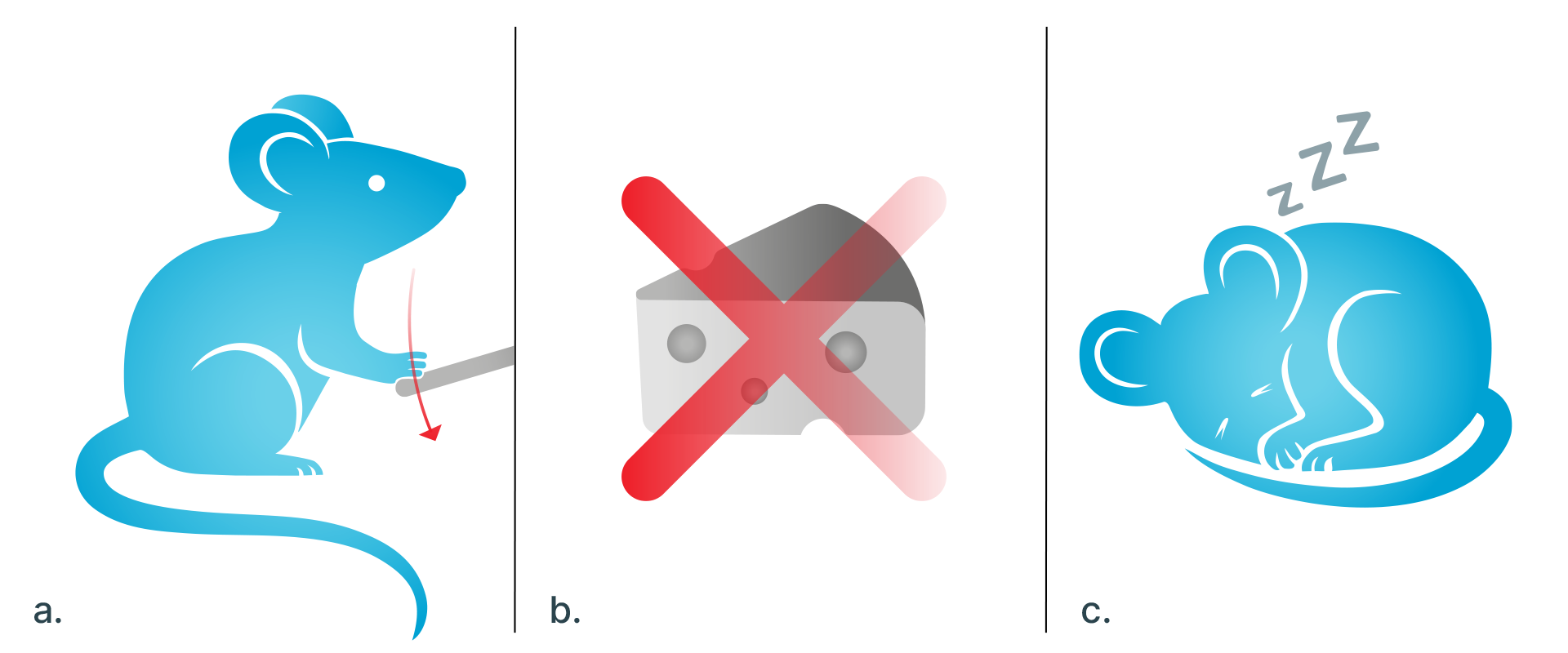

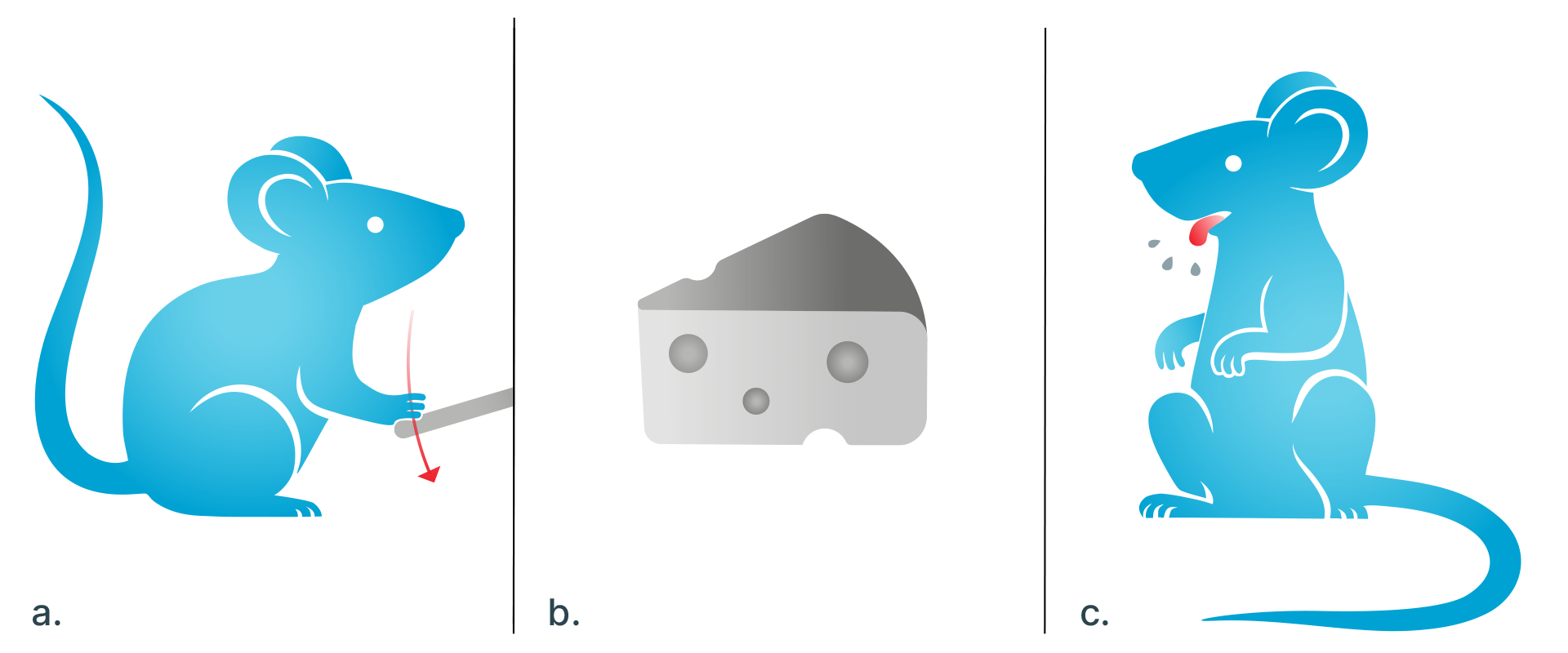

Quite a lot, actually. Imagine three mice, each in a slightly different version of an operant conditioning chamber, trying to obtain food by pressing a button:

MOUSE 1

a) Mouse 1 is hungry and presses the button.

b) No food is delivered.

c) After repeated attempts, it stops trying. It learns that the button has no relevance.

MOUSE 2

a) Mouse 2 is also hungry, but in its chamber it has the opposite experience.

b) Every time it presses the button, food is delivered. The more it presses, the more food it receives.

c) It learns that pressing the button reliably leads to food.

MOUSE 3

a) Mouse 3 is hungry as well, but the rules of its chamber are different. It presses the button just like the other two, but food is delivered only some of the time.

b) Sometimes nothing happens; sometimes a reward appears.

c) The outcome is unpredictable.

What we observe is that Mouse 3 learns to press the button compulsively. Mouse 1, by contrast, gives up because no food ever appears, and Mouse 2 presses only when hungry because food is always delivered. For Mouse 3, the unpredictable reward amounts to intermittent reinforcement: the resulting uncertainty drives repeated, even compulsive, button-pressing, regardless of hunger.
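One way to make "unpredictable" concrete is to treat each button press as a yes/no event with a fixed reward probability and compute how uncertain the outcome is. The sketch below uses Shannon entropy as that measure, which is an assumption added here rather than something the experiments above rely on. The reward probability of 0.5 for Mouse 3 is also assumed, since the text only says that food is delivered some of the time.

```python
import math


def outcome_entropy(p_reward):
    """Shannon entropy (in bits) of one press with reward probability p_reward."""
    if p_reward in (0.0, 1.0):
        return 0.0  # the outcome is fully predictable
    q = 1.0 - p_reward
    return -(p_reward * math.log2(p_reward) + q * math.log2(q))


# Assumed reward probabilities for the three chambers (0.5 is hypothetical).
chambers = {"Mouse 1": 0.0, "Mouse 2": 1.0, "Mouse 3": 0.5}
for mouse, p in chambers.items():
    print(f"{mouse}: reward probability {p:.1f}, "
          f"uncertainty {outcome_entropy(p):.2f} bits")
```

Mouse 1 and Mouse 2 face zero uncertainty, while Mouse 3 faces the maximum possible for a yes/no outcome. The sketch only quantifies unpredictability; it does not model why uncertainty leads to compulsive pressing.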

This is interesting in itself, but more importantly, the same pattern can be observed in humans. Although our cognition is often more complex than that of dogs or mice, the same principles suffice to explain many of our behaviors.

Ask yourself: How many times have you looked at your phone today?

And when you did, was what you saw good news, bad news, or a mix?

It was probably a mix. And that is precisely why many of us check our phones far more often than necessary. Every time you unlock your phone, you face a range of possible outcomes. It might be a kind message from a friend, or a stressful email from your boss. It could be praise – or an unexpected bill. Or nothing at all. This very unpredictability keeps us coming back – much like Mouse 3. It is the possibility of a reward, created by intermittent reinforcement, that keeps us hooked.

What This Shows

Many human behaviors – most strikingly those that appear irrational or compulsive – may already be explained by basic associative learning mechanisms.

Simple principles of operant conditioning may be at work far more often than we like to assume. In mice, pigeons, cats, dogs – and in us.

Fun Facts

Superstitious Pigeons

In 1948, B. F. Skinner noticed something unexpected in his pigeon experiments. The pigeons began to perform strange, repetitive actions – such as spinning in circles or swinging their heads – just before food was delivered.

Although the food appeared at fixed time intervals, regardless of the birds’ behavior, the pigeons acted as if their movements caused the food to arrive. Skinner suggested that they were attempting to influence the timing of food delivery and had developed a form of superstition. He further suspected that humans might engage in similar behaviors – believing that certain actions produce outcomes even when no real causal connection exists.

While this idea does not explain all forms of superstition, it sheds light on a surprising range of everyday behaviors that follow similar logic. Examples include wearing a “lucky” shirt, performing small rituals before important events, repeatedly refreshing email or social media feeds in the hope of new messages, or persistently pressing elevator or crosswalk buttons – even when these actions have no actual effect.
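The pigeon observation can be sketched as a small simulation. This is not Skinner's procedure in detail. It assumes four hypothetical actions, that food arrives on a fixed schedule regardless of what the bird does, and that whichever action happened to occur at the moment of delivery is strengthened. The interval, the boost size, and the action names are illustrative choices.

```python
import random


def superstitious_pigeon(steps=600, interval=15, boost=1.0, seed=0):
    """Simulate a pigeon fed every `interval` steps, whatever it does.

    Assumption (illustrative): the action performed at the moment food
    arrives gets strengthened, although it did not cause the food.
    """
    rng = random.Random(seed)
    actions = ["turn in circle", "swing head", "peck floor", "flap wings"]
    weights = {a: 1.0 for a in actions}
    deliveries = 0
    for step in range(1, steps + 1):
        # The bird picks an action in proportion to its current weight.
        action = rng.choices(actions, weights=[weights[a] for a in actions])[0]
        if step % interval == 0:  # food arrives on schedule, independent of action
            deliveries += 1
            weights[action] += boost  # accidental reinforcement
    return weights, deliveries


final_weights, food_count = superstitious_pigeon()
print(f"food deliveries: {food_count}")
for name, w in sorted(final_weights.items(), key=lambda kv: -kv[1]):
    print(f"{name}: weight {w:.0f}")
```

The number of food deliveries depends only on the schedule, never on the bird's behavior. Because food strengthens whatever action came just before it, early coincidences tend to snowball, although which action ends up dominant depends on the random seed.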

Author: Fabian Müller

References

Pavlov, I. P. (1927). Conditioned reflexes: An investigation of the physiological activity of the cerebral cortex. Oxford University Press.

Skinner, B. F. (1948). “Superstition” in the pigeon. Journal of Experimental Psychology, 38(2), 168–172.

Thorndike, E. L. (1898). Animal intelligence: An experimental study of the associative processes in animals. Psychological Review: Monograph Supplements, 2(4), i–109.

Clark’s Classroom, M. (n.d.). The research experiment that saved me from scrolling (& learn to love reading again) [Video]. YouTube: https://www.youtube.com/watch?v=z4ePPCeUZTA

Hirsch, J. (1970). The Harvard Law of Animal Behavior: “Under the most carefully controlled experimental conditions the animals do as they damn please.” In N. Bischof (Ed.), Aristoteles, Galilei, Kurt Lewin – und die Folgen (p. 89). Universität München.

1. Of course, this experimental setup was not exactly ecologically valid. The dogs’ behavioral repertoire was so heavily constrained that their responses likely bore little resemblance to how they would act in a natural setting. Moving freely, for instance, dogs might jump, bark, or run toward the food when the bell rang. Controlling the experimental conditions, a choice justified by the aim of eliminating disruptive sources of variance, ended up quite literally restraining the animals’ behavior. This irony is captured in what became known as the Harvard Law of Animal Behavior: “Under the most carefully controlled experimental conditions, the animals do as they damn please.” Attributed to Jerry Hirsch, a colleague of Skinner. In N. Bischof (Ed.), Aristoteles, Galilei, Kurt Lewin – und die Folgen (p. 89). Universität München Press. ↩

2. You can find a video of this experiment here: https://www.youtube.com/watch?v=fanm--WyQJo ↩